Normalize talking to people

We're done apologizing.

Last week someone got in touch about attending the Qual360 conference in DC this spring and they were offering a discount, so I took a look. After scrolling through the program and the featured speakers, I was left wondering why on earth anyone would still be spending a thousand dollars to go to a conference that could literally have been held any time between, say, 2007 and now. The conversation about qualitative research simply hasn’t evolved — the central question among our colleagues still seems to be, “how best to make the case for qualitative research?” I think that question is the wrong one.

After over 15 years in this industry, the panel and talk titles just start to repeat — how to sell it to the team, how to sell it to clients, how to add it to quant and analytics, why it matters in an era of big data, how to make it more creative/collaborative/democratic/scalable. With the tenuous exception of a panel about whether you even need face-to-face qualitative research (spoiler: yeah, you do sometimes), any of these topics could have been pitched any time since I first started doing focus groups almost two decades ago.

These topics hint at what the industry thinks are the reasons clients don’t buy qualitative research and the apologies and excuses and ‘fixes’ we have to make to get them to buy it anyway. The reasons are all too familiar — it costs too much, it takes too long, the sample is either too small or not representative enough, people lie and numbers don’t. The first two complaints lead those trying to innovate in our industry to the same inevitable solutions - automation and machine learning. The last two complaints simply lead the client to spend more on quantitative research, analytics, and ‘big data’ and skip the qual altogether.

Too often these complaints are taken at face value and assumed to simply mean there’s something wrong — or at least not quite right — with qualitative research. But here’s the thing — there’s nothing inherently wrong with qualitative research as a set of tools. It might not be the right set of tools for the job, but that doesn’t make it wrong in all cases. A lot of times, it’s the perfect toolkit, or it’s the perfect way to hone the other tools you’re using.

I learned this in consumer products research: when someone says they think something is too expensive, sometimes it means they literally can’t afford it, but most of the time, they mean it’s not worth it to them; when someone says they don’t have time to do a thing, sometimes it means they literally don’t have any more available time, but most of the time, it means they just don’t want to do the thing.

The Real Problem with Qualitative Research

We’re letting cost and timing distract us from the real issue: some clients don’t believe they can rely on qualitative research to make a big decision. More sophisticated clients might question the sample size and representativeness — and that’s how you know they’re afraid to stick their necks out for just a few customers or prospects.

So if this question of “can I rely on it” is the real obstacle to using qualitative research methods, then there are some ways to address it that have nothing to do with speed or cost.

First — work to ensure your sample is truly representative (we’re working on this from a variety of angles, especially through our project to build an inclusive and accessible participant research panel).

Second — check to make sure you’re doing enough research. With all due respect to Erika Hall and her book Just Enough Research (which I recommend and buy for people all the time), I think the title has led some to mistakenly conclude that it means the best way to do qualitative research is to do the least amount you can get away with. And that maybe kinda works when you’ve got a homogeneous audience — but I’d hazard a guess that most brands and products really don’t, so… what you might think is “just enough” research is probably not enough.

Third — check whether it’s really about the method of data collection, or about how hard it is for the client to do anything with the results. We constantly hear people say, “the data will tell us what to do,” and we constantly remind them that the data will do no such thing. They, with our help, will have to interpret the data; and they, with our help, will have to apply the interpretation of the data to their business problems; and they, with our help, will need to use that process of analysis and interpretation and application to determine what they should do next. Note I said ‘should’ not ‘could’. Strategists and consultants using qualitative methods, just like their quantitative brethren, need to start taking more of a stand — to not just identify opportunities but to prioritize them. All of these steps — the analysis, interpretation, application, and prioritization — are the most important places where qual could be ‘better’, and interestingly, they happen after the data has been collected.

Change the Conversation

So instead of ‘making the case’ for qualitative research as a methodology, finding ways to be more like quant or more like analytics, the more interesting problems for the qualitative research industry to solve are:

- Delivery & Actionability. This is a question everyone in business intelligence/data collection gets from customers and clients: What can or should I DO with this information? Instead of inimitable stories about uncovering some surprising nugget from the research, talk about how the research inspired a great idea that actually got made. Instead of talking about how qual makes quant better, talk about how it makes business decision making better.

- Recruitment & Training. Qualitative research is an apprenticeship trade for most of us in the market or design research fields. Meanwhile, most social scientists are trained more in quant than qual methods. But we learn preferred methods of research and modes of analysis from our mentors, and if we stay in one place too long we may never learn the other methods. If we work for researchers who don’t believe in making strong recommendations, we may never learn how to be good consultants. Being a good qualitative researcher is one part methodological design + one part fieldwork aptitude + one part interpretation and analysis + at least one part consulting and collaboration with clients. How do we get better at the full cycle of the practice — not just data collection and coding?

I’m just plain tired of apologizing for the methods we use to collect data, the time it takes or its cost. Qualitative research is always simply an instrument we use to understand people and culture and behavior and mental models and so on — and the reason we want to understand those things is to help our clients make better decisions about who they serve and how best to serve them. I’d like to see less, well, groveling, and instead see the industry become more assertive and more opinionated about what our clients should do with the research.

Anyway — until the industry starts really getting into the thick of far more interesting topics, I’m not going to the conference. I’ve heard — and said, and done — it all before.

Stuff that caught our attention

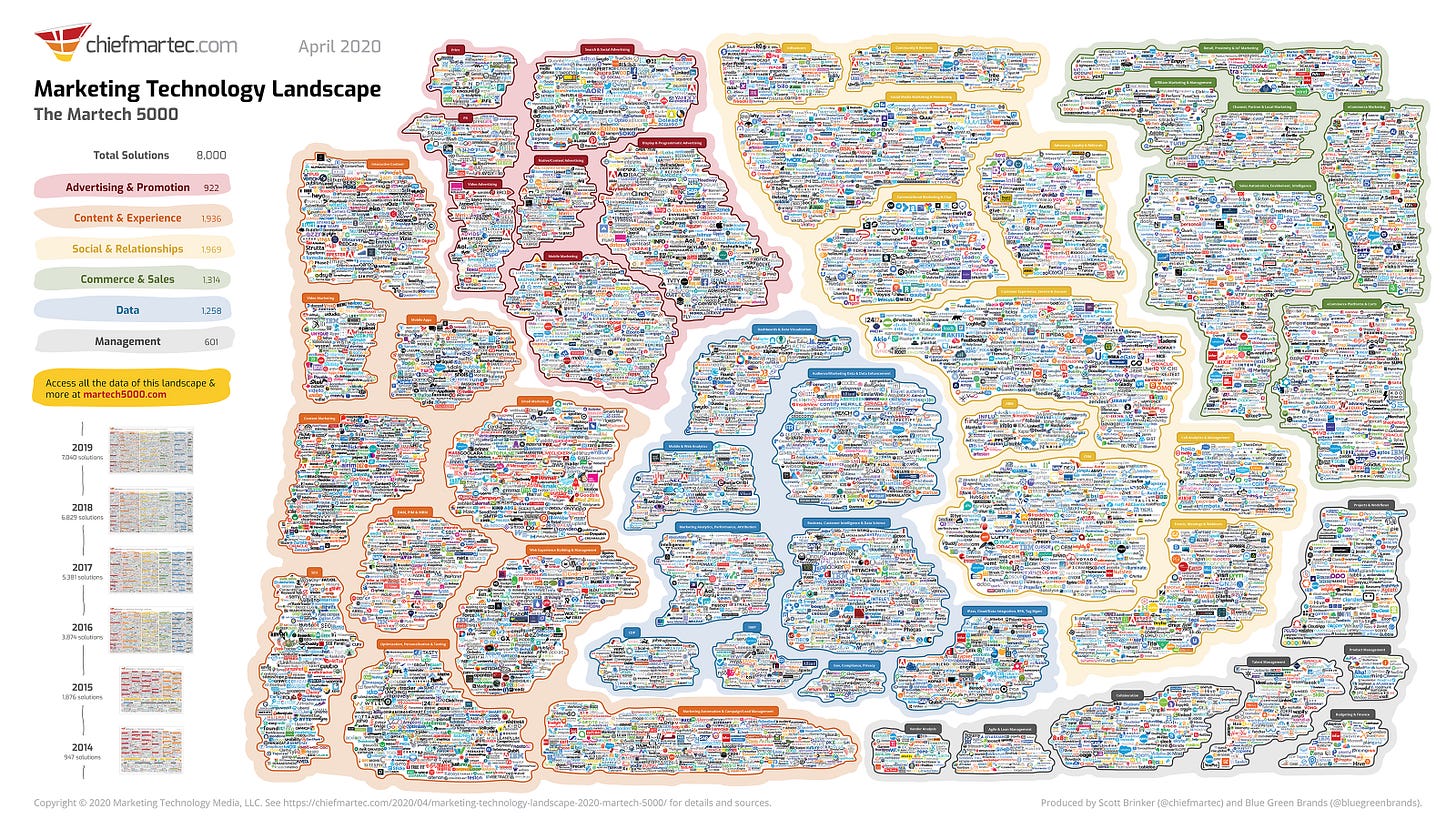

I’m still working my way through this excellent interview between Charlie Warzel and Shoshana Wodinsky about the martech ecosystem and how it is… bad. (Also, it always has been — I think back fondly on the old ad trading desk schemes from the late aughts, which no doubt continue to occur without much regulator interest.)

Okay, that’s enough from me, let’s see what the team is thinking about.

Here’s something kind of fascinating from Nic.

On first read, the title of this article (“Early concepts of intimacy: Young humans use saliva sharing to infer close relationships”) immediately made me recoil and begin listing out reasons I should just flat out deny it. “How could spit sharing possibly be useful, or even plausibly occur regularly enough for humans to develop any meaningful relationships?” However, I really enjoyed the larger message this piece poses: relationships between humans are complicated and may develop in surprising ways.

And from Ashley:

This article from AdWeek covers some of the most accessible brands of 2021. One of the featured ads is from Google and their “A CODA Story” (video above). Of this ad, Josh Loebner, executive director of inclusion and accessibility at Designsensory, says that “Google’s proficiency with this kind of authentic storytelling comes not only from an inclusive creative team, but also from its willingness to listen to disabled communities while including them in all facets of the creative process... They’re conducting focus groups. They are wanting to hear authentic stories and to portray those in a way that includes people with disabilities as opposed to just bolting on the disability story in a way that seems disingenuous. They’re bringing people with disabilities to the table as consultants, as members of their team and within their research.”

Co-creation is not a new concept. In fact, it’s one we’ve been talking about for many years. But including people with disabilities in the research, before creative development really starts, is the key. This is where you can get insight that inspires more authentic creative ideas.

Still — while I’m thrilled brands are being more accessible, at what point will accessibility be the norm and not a thing to be praised for?

Amen to that.